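Many users run into the same problem after upgrading OCP from 4.3.3 to 4.3.4: host performance monitoring works normally, but database monitoring data is not displayed. This post uses a real production case to walk through the root cause, the troubleshooting steps, and the solution.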

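[Applies to] 4.3.4-20250217152357

Product: OceanBase OCP

Version: 4.3.4-20250217152357

Problem type: OCP monitoring data is not displayed

Problem description: database monitoring data is not displayed, but host performance data is displayed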

OCP log

2025-03-20 15:58:56.117 INFO 8 --- [metric-parse-36,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457535, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:58:56.117 INFO 8 --- [metric-parse-7,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457535, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:01.117 INFO 8 --- [metric-parse-39,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457540, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:01.117 INFO 8 --- [metric-parse-25,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457540, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:01.117 INFO 8 --- [metric-parse-38,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457540, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:01.117 INFO 8 --- [metric-parse-25,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457540, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:06.117 INFO 8 --- [metric-parse-13,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457545, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:06.117 INFO 8 --- [metric-parse-4,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457545, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms

2025-03-20 15:59:06.117 INFO 8 --- [metric-parse-1,,] c.o.o.m.s.OcpMetricCollectServiceImpl : Collect failed, exporter=http://***.***.***.***:62889/metrics/ob/basic, collectAt=1742457545, message=java.util.concurrent.TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms, rootCause=TimeoutException: Read timeout to ***.***.***.***/***.***.***.***:62889 after 1000 ms
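To see how the failures are distributed, the ocp.log excerpt above can be summarized with a short script. This is only a sketch: the regular expression is inferred from the lines quoted in this post and may not match other OCP versions, and `summarize_collect_failures` is a name made up for illustration.

```python
import re
from collections import Counter

# Pattern inferred from the log lines quoted in this post (an assumption,
# not an official OCP log format specification).
COLLECT_FAILED = re.compile(
    r"Collect failed, exporter=(?P<exporter>\S+), collectAt=(?P<collect_at>\d+), "
    r".*?Read timeout to \S+ after (?P<timeout_ms>\d+) ms"
)


def summarize_collect_failures(lines):
    """Count read-timeout failures per (exporter URL path, timeout in ms)."""
    counts = Counter()
    for line in lines:
        match = COLLECT_FAILED.search(line)
        if match is None:
            continue
        # Keep only the URL path so that masked hosts still group together.
        path = "/" + match.group("exporter").split("/", 3)[-1]
        counts[(path, int(match.group("timeout_ms")))] += 1
    return counts
```

Run against the excerpt above, every failure should land in one group, `/metrics/ob/basic` with a 1000 ms read timeout, which suggests looking at that single endpoint first.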

monagent.log

2025-03-20T15:44:11.47386+08:00 ERROR [3771761,] caller=file/file.go:305:func1: prevent panic by handling failure accessing a path "/home/admin/oceanbase/log/observer.log.wf.20250320124759159" fields:, error="lstat /home/admin/oceanbase/log/observer.log.wf.20250320124759159: no such file or directory"

2025-03-20T15:44:11.47394+08:00 ERROR [3771761,] caller=logtailer/log_tailer_executor.go:360:getWatchedNewLogs: findFilesAndSortByMTime error fields: error="lstat /home/admin/oceanbase/log/observer.log.wf.20250320124759159: no such file or directory"

2025-03-20T15:44:11.47398+08:00 ERROR [3771761,] caller=logtailer/log_tailer_executor.go:317:handleWatchedNewLogs: getLogsWithinTime failed fields: error="lstat /home/admin/oceanbase/log/observer.log.wf.20250320124759159: no such file or directory", tailConf="{/home/admin/oceanbase/log observer.log 500ms observer ob_light}"

2025-03-20T15:44:11.47403+08:00 ERROR [3771761,] caller=logtailer/log_tailer_executor.go:275:WatchFile: getWatchedNewLogs failed fields: error="lstat /home/admin/oceanbase/log/observer.log.wf.20250320124759159: no such file or directory"

2025-03-20T15:44:11.47405+08:00 ERROR [3771761,] caller=logtailer/log_tailer_executor.go:416:func2: handleWatchedNewLogs failed fields:, fileRealPath=/home/admin/oceanbase/log/observer.log, error="lstat /home/admin/oceanbase/log/observer.log.wf.20250320124759159: no such file or directory"

2025-03-20T15:44:11.88563+08:00 ERROR [3771761,959b39ccf5497d6a] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields:, error="write tcp ***.***.***.***:62889->***.***.***.***:13658: write: broken pipe"

2025-03-20T15:44:13.46777+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3783989 fields: error="open /proc/3783989/status: no such file or directory"

2025-03-20T15:44:18.93651+08:00 ERROR [3771761,4ad07496701825c7] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields:, error="write tcp ***.***.***.***:62889->***.***.***.***:13672: write: broken pipe"

2025-03-20T15:44:19.65054+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784066 fields:, error="open /proc/3784066/status: no such file or directory"

2025-03-20T15:44:20.36105+08:00 ERROR [3771761,87f70b5eb0a0d972] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields:, error="write tcp ***.***.***.***:62889->***.***.***.***:18264: write: broken pipe"

2025-03-20T15:44:20.83329+08:00 WARN [3771761,a39a4d26710acfc6] caller=mysql/table_input.go:411:collectData: failed to do collect with sql SELECT tenant_id, SVR_IP, SVR_PORT, count(*) as cnt FROM CDB_OB_TABLE_LOCATIONS WHERE TABLE_ID > 500000 GROUP BY TENANT_ID, SVR_IP, SVR_PORT, args:[], err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:20.83332+08:00 WARN [3771761,a39a4d26710acfc6] caller=common/input_cache.go:47:Update: update cache for key partition_replicas, err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:21.25993+08:00 WARN [3771761,a39a4d26710acfc6] caller=mysql/table_input.go:411:collectData: failed to do collect with sql SELECT tenant_id, svr_ip, svr_port, count(*) as cnt FROM CDB_OB_TABLE_LOCATIONS WHERE ROLE = 'leader' AND TABLE_ID > 500000 GROUP BY TENANT_ID, SVR_IP, SVR_PORT, args:[], err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:21.25997+08:00 WARN [3771761,a39a4d26710acfc6] caller=common/input_cache.go:47:Update: update cache for key primary_leaders, err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:21.27496+08:00 WARN [3771761,a39a4d26710acfc6] caller=mysql/table_input.go:411:collectData: failed to do collect with sql select t1.tenant_id, t1.database_name, t3.object_id as database_id, sum(t2.data_size) as data_size, sum(t2.required_size) as required_size from (select tenant_id, database_name, table_id, tablet_id from cdb_ob_table_locations group by tenant_id, database_name, tablet_id) t1 left join (select tenant_id, tablet_id, svr_ip, svr_port, data_size, required_size from cdb_ob_tablet_replicas) t2 on t1.tenant_id = t2.tenant_id and t1.tablet_id = t2.tablet_id left join (select con_id, object_name ,object_id from CDB_OBJECTS where object_type = 'DATABASE') t3 on t1.tenant_id = t3.con_id and t1.database_name = t3.object_name group by t1.tenant_id, t1.database_name, args:[], err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:21.275+08:00 WARN [3771761,a39a4d26710acfc6] caller=common/input_cache.go:47:Update: update cache for key ob_database_disk, err: Error 4012 (HY000): Timeout, query has reached the maximum query timeout: 10000000(us), maybe you can adjust the session variable ob_query_timeout or query_timeout hint, and try again.

2025-03-20T15:44:21.50663+08:00 ERROR [3771761,7768ac2b966034ca] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields: error="write tcp ***.***.***.***:62889->***.***.***.***:30458: write: broken pipe"

2025-03-20T15:44:27.37246+08:00 ERROR [3771761,bb0ad60200776dd4] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields:, error="write tcp ***.***.***.***:62889->***.***.***.***:18258: write: broken pipe"

2025-03-20T15:44:28.90846+08:00 WARN [3771761,] caller=oceanbase/sql_audit_merge.go:482:collectRawMsgsByTenant: {Code:15004 ShortError:collect.sql.audit.input.request.id.jump}, Details: tenantId: 1086 minRequestId 6920157 is larger than startRequestId 6920150, maybe lost 7 data

2025-03-20T15:44:31.71+08:00 ERROR [3771761,f47b434be1437f47] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields: error="write tcp ***.***.***.***:62889->***.***.***.***:55444: write: broken pipe"

2025-03-20T15:44:33.61048+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784291 fields:, error="open /proc/3784291/status: no such file or directory"

2025-03-20T15:44:33.61058+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784293 fields: error="open /proc/3784293/status: no such file or directory"

2025-03-20T15:44:38.09484+08:00 ERROR [3771761,955b7f8a906d2507] caller=engine/route_manager.go:210:ServeHTTP: failed to write http response from buffer fields:, error="write tcp ***.***.***.***:62889->***.***.***.***:55448: write: broken pipe"

2025-03-20T15:44:38.33458+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784400 fields:, error="open /proc/3784400/status: no such file or directory"

2025-03-20T15:44:38.33465+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784401 fields: error="open /proc/3784401/status: no such file or directory"

2025-03-20T15:44:38.3347+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784402 fields:, error="open /proc/3784402/status: no such file or directory"

2025-03-20T15:44:38.33474+08:00 WARN [3771761,] caller=common/process.go:106:refreshProcesses: failed to get process name of pid: 3784403 fields:, error="open /proc/3784403/status: no such file or directory"
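The monagent.log excerpt mixes several unrelated kinds of messages, so it helps to bucket them before reading further. The sketch below does that with substring checks. The bucket names (`client_disconnected`, `sql_timeout`, `missing_path`) and the line pattern are assumptions made for this post, derived only from the lines shown above.

```python
import re
from collections import Counter

# Line layout inferred from the excerpt above: timestamp, level, [pid,trace], caller=file:line:func:
LINE = re.compile(
    r"^\S+ (?P<level>[A-Z]+) \[[^\]]*\] caller=(?P<file>[^:\s]+):\d+:(?P<func>\w+):"
)


def classify(line):
    """Assign a log line to a hypothetical bucket based on its error text."""
    if "broken pipe" in line:
        return "client_disconnected"  # the peer closed the connection before the write finished
    if "Error 4012" in line:
        return "sql_timeout"  # collection SQL hit the query timeout
    if "no such file or directory" in line:
        return "missing_path"  # a file or /proc entry vanished before it was read
    return "other"


def summarize(lines):
    """Return (count per log level, count per bucket) for recognizable lines."""
    by_level, by_kind = Counter(), Counter()
    for line in lines:
        match = LINE.match(line)
        if match is None:
            continue
        by_level[match.group("level")] += 1
        by_kind[classify(line)] += 1
    return by_level, by_kind
```

Two things stand out in the excerpt. The `broken pipe` errors on port 62889 are what one would expect if ocp-server gives up after its read timeout while monagent is still writing the response, and the `Error 4012` lines report a query timeout of 10000000 us, which is 10 seconds.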

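The ocp.log entries above show that ocp-server is hitting read timeouts. The cause is that the agent collects too much data and does not have enough CPU to serve it in time.

The following commands show how much data the endpoint returns and how long it takes: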

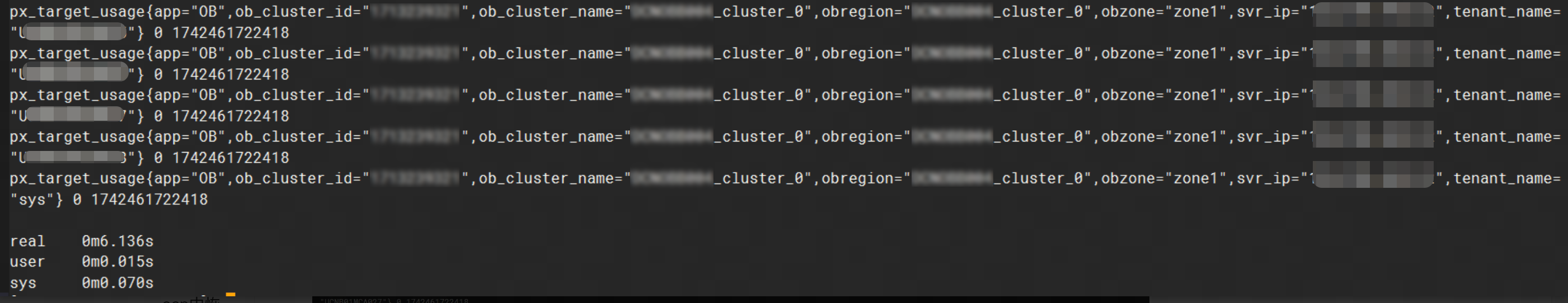

time sudo curl --unix-socket /home/admin/ocp_agent/run/ocp_monagent.$(cat /home/admin/ocp_agent/run/ocp_monagent.pid).sock http://unix-socket-server/metrics/ob/basic

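If the response comes back within 5s, you can adjust ocp.monitor.collect.request.timeout in the ocp database of the meta tenant.

The timeout values are adjustable, but the maximum supported value is 5s. In this case the response took 6s, so adjusting the parameter has no effect.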
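The rule of thumb above can be written down as a tiny decision helper. It is a sketch under stated assumptions: the 1000 ms default comes from the read timeout seen in ocp.log, the 5000 ms ceiling comes from this post, and the function name and return labels are invented for illustration.

```python
CURRENT_READ_TIMEOUT_MS = 1000  # read timeout seen in the ocp.log excerpt
MAX_READ_TIMEOUT_MS = 5000      # ceiling stated in this post


def advise(elapsed_ms,
           current_timeout_ms=CURRENT_READ_TIMEOUT_MS,
           max_timeout_ms=MAX_READ_TIMEOUT_MS):
    """Suggest a next step from the measured response time of the exporter."""
    if elapsed_ms < current_timeout_ms:
        return "ok"                        # already fits in the current timeout
    if elapsed_ms < max_timeout_ms:
        return "raise_timeout"             # tuning the timeout can cover it
    return "timeout_tuning_insufficient"   # slower than the largest allowed timeout
```

With the 6s response measured in this case, `advise(6000)` falls into the last branch, which matches the observation that changing the parameter did not help.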

update config_properties set value = '{"second_connect_timeout":1000,"second_read_timeout":3000,"second_request_timeout":10,"minute_connect_timeout":10000,"minute_read_timeout":30000,"minute_request_timeout":40000}' where `key` like "ocp.monitor.collect.request.timeout";
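Hand-editing a JSON value inside a SQL string is error-prone, so one option is to generate the statement. The sketch below reproduces the statement above for a chosen `second_read_timeout`. Treat it as an illustration only: `build_timeout_update` is a hypothetical helper, the other five values are copied unchanged from the statement above, and applying the 5s ceiling to `second_read_timeout` specifically is an assumption.

```python
import json

CONFIG_KEY = "ocp.monitor.collect.request.timeout"
# Assumption: the 5s maximum mentioned in this post applies to second_read_timeout.
MAX_SECOND_READ_TIMEOUT_MS = 5000


def build_timeout_update(second_read_timeout_ms):
    """Return the UPDATE statement with the given second-level read timeout (ms)."""
    if not 0 < second_read_timeout_ms <= MAX_SECOND_READ_TIMEOUT_MS:
        raise ValueError("second_read_timeout must be in (0, 5000] ms")
    value = {
        "second_connect_timeout": 1000,
        "second_read_timeout": second_read_timeout_ms,
        "second_request_timeout": 10,  # copied as-is from the statement above
        "minute_connect_timeout": 10000,
        "minute_read_timeout": 30000,
        "minute_request_timeout": 40000,
    }
    # Compact separators keep the JSON byte-for-byte in the same style as above.
    payload = json.dumps(value, separators=(",", ":"))
    return "update config_properties set value = '{}' where `key` like \"{}\";".format(
        payload, CONFIG_KEY
    )
```

Calling `print(build_timeout_update(3000))` prints a statement equivalent to the one above. Review the output before running it against the meta tenant.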

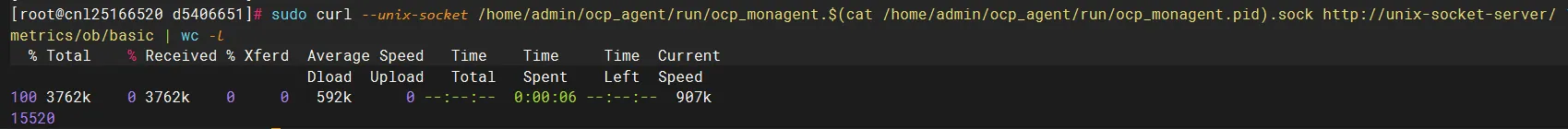

sudo curl --unix-socket /home/admin/ocp_agent/run/ocp_monagent.$(cat /home/admin/ocp_agent/run/ocp_monagent.pid).sock http://unix-socket-server/metrics/ob/basic | wc -l

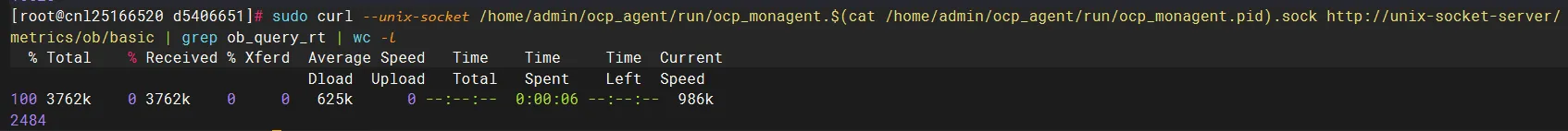

sudo curl --unix-socket /home/admin/ocp_agent/run/ocp_monagent.$(cat /home/admin/ocp_agent/run/ocp_monagent.pid).sock http://unix-socket-server/metrics/ob/basic | grep ob_query_rt | wc -l

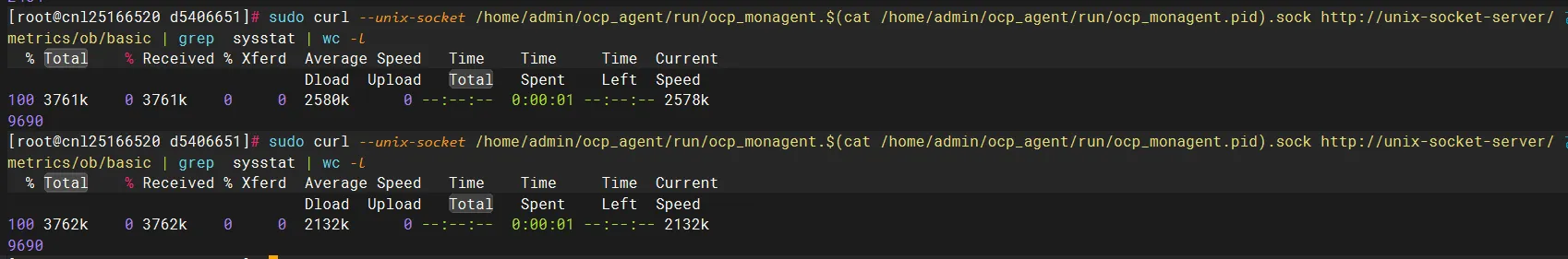

sudo curl --unix-socket /home/admin/ocp_agent/run/ocp_monagent.$(cat /home/admin/ocp_agent/run/ocp_monagent.pid).sock http://unix-socket-server/metrics/ob/basic | grep sysstat | wc -l
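The three counts above each fetch the exporter output again. If the output is saved to a file once, the same numbers can be computed in a single pass. The sketch below assumes the output has already been captured as text, and `count_metric_lines` is a name made up for this post. It uses substring matching in the same way as `grep`.

```python
def count_metric_lines(text, needles=("ob_query_rt", "sysstat")):
    """Count all lines, plus lines containing each needle (like `grep needle | wc -l`)."""
    lines = text.splitlines()
    result = {"total": len(lines)}
    for needle in needles:
        result[needle] = sum(1 for line in lines if needle in line)
    return result
```

Comparing the per-metric counts with the total shows which metric families dominate the payload that monagent has to produce on every scrape.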

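These results show that the volume of collected data is large, so the timeouts are probably caused by insufficient CPU.

We then checked the CPU configuration: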

- key: monagent.limit.cpu.quota
  value: "1.0"
  valueType: string
  encrypted: false
  configVersion: "2025-02-17T16:11:20+08:00"

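The agent was configured with 1 CPU core (1C).

We asked the customer to try 2C.

However, after the quota was subsequently changed to 2C and then 4C, the response time suddenly rose to more than 1 minute.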

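Overall, the timeouts are still caused by insufficient CPU. However, versions earlier than 4.3.5 cannot adjust the number of CPU cores available to the agent, only its memory. The fix is to upgrade to 4.3.5, where monagent.limit.cpu.quota can be modified in the OCP web console under O&M configuration.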
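When checking several OCP installations, the version condition above can be expressed as a simple comparison. This is a minimal sketch: it assumes version strings look like `4.3.4-20250217152357` as shown at the top of this post, and `cpu_quota_adjustable` is a hypothetical helper, not part of OCP.

```python
MIN_VERSION_FOR_CPU_QUOTA = (4, 3, 5)  # per this post: adjustable from 4.3.5 onward


def parse_version(version):
    """Turn '4.3.4-20250217152357' into (4, 3, 4), ignoring the build suffix."""
    core = version.split("-", 1)[0]
    return tuple(int(part) for part in core.split("."))


def cpu_quota_adjustable(version, minimum=MIN_VERSION_FOR_CPU_QUOTA):
    """True if this OCP version is at or above the one that allows changing the agent CPU quota."""
    return parse_version(version) >= minimum
```

For the version in this case, `cpu_quota_adjustable("4.3.4-20250217152357")` returns `False`, which is why the upgrade is needed before the CPU quota can be raised.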

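That wraps up the troubleshooting process and solution for monitoring data not being displayed after upgrading OceanBase OCP from 4.3.3 to 4.3.4. If you run into similar hard-to-diagnose errors while operating OceanBase, leave a comment and we can discuss it. We will keep sharing practical technical content. See you next time!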